The quest for an unbiased scientific impact indicator remains open

- Giacomo Vaccario, Shuqi Xu, Manuel S Mariani, Matúš Medo

- Science of science

- October 8, 2024

A study published in the journal PNAS questions whether ĉ10, a network-based scientific impact indicator that Ke et al. proposed as fairly comparable across time and fields, is actually unbiased. The analysis shows that ĉ10 exhibits a strong age bias: it tends to rank older papers higher than younger ones. The study suggests that this bias could be a problem for scientists who rely on ĉ10 to evaluate the impact of their research. Furthermore, the study found that raw citation count c outperforms ĉ10 in identifying groundbreaking research, an outcome it links to temporal distribution not being controlled for. This calls into question the fairness of ĉ10 and highlights the need for more research into algorithmic bias and fairness in scientometrics. The authors suggest that developing unbiased indicators of scientific impact remains a pressing question in the scientometrics and science of science communities.

The idea of using network-based mechanisms to prevent biases is compelling, yet ĉ10 is not unbiased.

Why This Matters for Scientists

For scientists, the findings of this study are significant because they question the fairness of a recently proposed scientific impact indicator that was presented as comparable across time and fields.

Quick Technical Overview

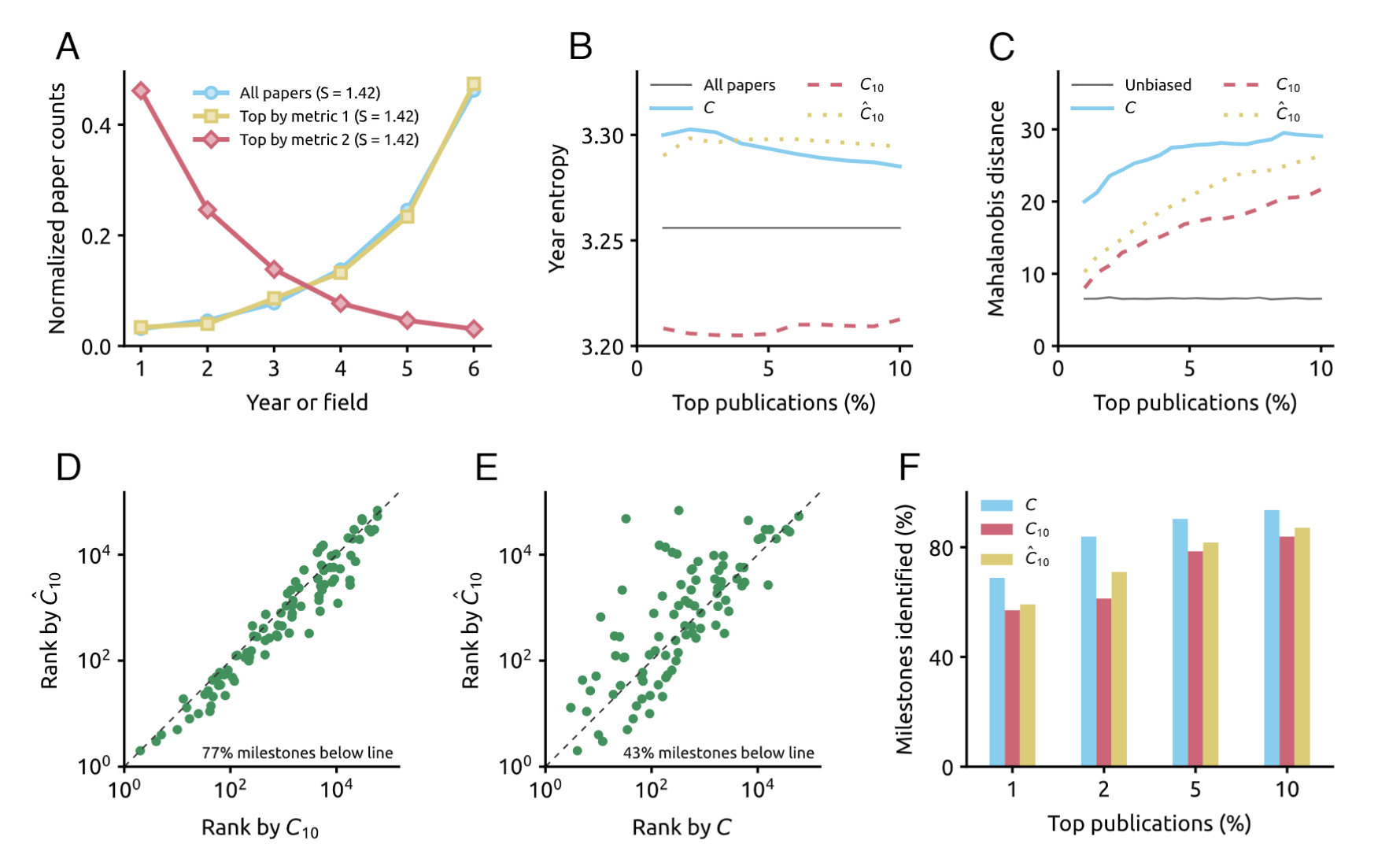

The study tested ĉ10 for bias by comparing it against the distribution of all papers in the American Physical Society (APS) citation dataset. The authors found that ĉ10 exhibits a strong age bias: it tends to rank older papers higher than younger ones. The novelty of this work lies in its systematic comparison of ĉ10 to other metrics, such as raw citation count c, and its replication of the findings in a large dataset.
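To make the idea of an age-bias check concrete, here is a minimal sketch of one common style of test: split papers into equal-sized age groups and count how many of the top-ranked papers land in each. This is an illustration only, not the procedure used in the study. The function name, the `years` and `scores` inputs, and the toy data are all assumptions.

```python
def top_counts_by_age_group(years, scores, n_groups=4, top_fraction=0.1):
    """Count top-ranked papers per age group (group 0 = oldest papers).

    years, scores: parallel lists, one entry per paper (hypothetical inputs).
    Returns (observed, expected), where expected is the per-group count an
    age-unbiased indicator would produce on average (exact only when the
    number of papers is divisible by n_groups).
    """
    n = len(years)
    # Order papers from oldest to newest and assign equal-sized age groups.
    order_by_age = sorted(range(n), key=lambda i: years[i])
    group = [0] * n
    for rank, i in enumerate(order_by_age):
        group[i] = rank * n_groups // n

    # Select the top fraction of papers by indicator score.
    n_top = max(1, round(top_fraction * n))
    top = sorted(range(n), key=lambda i: scores[i], reverse=True)[:n_top]

    observed = [0] * n_groups
    for i in top:
        observed[group[i]] += 1
    return observed, n_top / n_groups


# Toy data: 20 papers, one per year, with a score that grows with age.
years = list(range(2000, 2020))
age_biased_scores = [2020 - y for y in years]
observed, expected = top_counts_by_age_group(
    years, age_biased_scores, n_groups=4, top_fraction=0.25
)
print(observed, expected)
```

If every top paper falls in the oldest group while the expected count per group is much smaller, the indicator is favoring older papers in this toy setting. A real analysis would also need a statistical criterion for how large a deviation counts as bias.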

We find that this is due to ĉ10's bias toward older papers, already evident in figure 4C in ref. 3.

Summary for Policy Makers

The study's findings bear on the development of fair, unbiased scientific impact indicators, on the evaluation of research, and on the identification of groundbreaking papers. The authors suggest that developing unbiased indicators of scientific impact remains a pressing question in the scientometrics and science of science communities, and the results highlight the need for more research into algorithmic bias and fairness in scientometrics. The study also reports that raw citation count c outperforms ĉ10 in identifying groundbreaking research, an outcome it links to temporal distribution not being controlled for.

In sum, the idea of using network-based mechanisms to prevent biases is compelling, yet ĉ10 is not unbiased.

Disclaimer

The above summaries were generated with the assistance of an AI system.

Abstract

Developing unbiased indicators of scientific impact has long been a central question in the scientometrics and science of science communities (1, 2). Ke et al. (3) recently tackled the ambitious challenge of developing a paper-level network-based indicator that can be fairly compared across time and fields even without the need for a field classification system, concluding that their proposed ĉ10 achieves this objective. The idea of leveraging a network-based mechanism to prevent impact indicator bias provides a compelling perspective to the long-standing debate on indicator bias, which could inspire many future works. Unfortunately, the validation performed in the paper does not properly test for bias, nor does it test properly for the indicator's ability to detect groundbreaking research.
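As a rough illustration of why publication age matters when testing an indicator's ability to detect groundbreaking research, the sketch below compares two ways of scoring how highly a set of labelled papers is ranked: against all papers, and against papers from the same year only. It is not the validation used by Ke et al. or by the authors of this letter. The function names, the `milestone_ids` input, and the toy data are hypothetical.

```python
def average_ranking_ratio(scores, milestone_ids):
    """Mean of rank / N over the labelled papers (rank 1 = highest score).

    Lower values mean the indicator places the labelled papers nearer the top.
    """
    n = len(scores)
    order = sorted(range(n), key=lambda i: scores[i], reverse=True)
    rank = {paper: pos + 1 for pos, paper in enumerate(order)}
    return sum(rank[m] / n for m in milestone_ids) / len(milestone_ids)


def within_year_ranking_ratio(years, scores, milestone_ids):
    """Same idea, but each labelled paper is ranked only among papers
    published in the same year, which removes the effect of age."""
    ratios = []
    for m in milestone_ids:
        peers = [i for i in range(len(scores)) if years[i] == years[m]]
        better = sum(1 for i in peers if scores[i] > scores[m])
        ratios.append((better + 1) / len(peers))
    return sum(ratios) / len(ratios)


# Toy data: the labelled paper (index 2) is the best of its year,
# yet it is outscored by two older papers.
years = [2000, 2000, 2010, 2010, 2010]
scores = [10, 8, 6, 4, 2]
print(average_ranking_ratio(scores, [2]))
print(within_year_ranking_ratio(years, scores, [2]))
```

In this toy case the labelled paper looks mediocre in the global ranking but is the top paper of its cohort, which is the kind of discrepancy an age-controlled evaluation is meant to expose.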