The Robotic Herd: Using Human-Bot Interactions to Explore Irrational Herding

- Luca Verginer, Giacomo Vaccario, Piero Ronzani

- Cooperation and opinion dynamics, Game theory

- September 21, 2023

In a study of 1,997 participants playing an online minority game against bots, 30% followed the majority, even though theory predicts no herding in this setting. The study also examined how people's behavior and beliefs shift when they know their opponents are bots. Informed participants adjusted their beliefs about the bots' behavior, but their decisions barely changed, so herding persisted in both conditions.

Herding can be rational when the group has better information than the individual (Couzin et al., 2005).

Why This Matters for Scientists

For researchers studying human behavior and decision-making, the key finding is that herding is persistent: it occurs in a setting where following the majority is not rewarded, and it occurs even when individuals know they are interacting with automated entities.

Quick Technical Overview

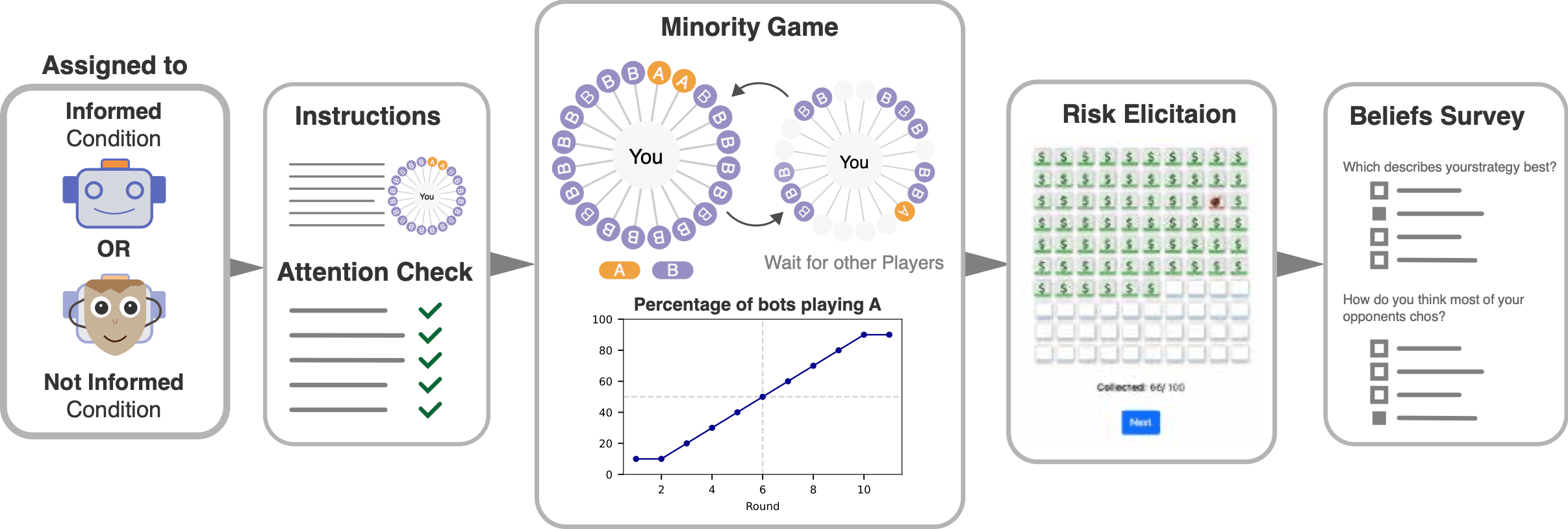

The study used a minority game with bots to investigate how group behavior influences individual choices. Participants were randomly assigned to either the informed condition (opponents referred to as "Bots") or the uninformed condition (opponents referred to as "humans"). The novelty of this study lies in its use of scripted automated players to investigate herding behavior in a strategic setting.
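To make the payoff rule of a minority game concrete, here is a minimal Python sketch of a single round. The group size, the option labels "A" and "B", the 0/1 payoff, and the tie rule are illustrative assumptions and are not taken from the study; the function names are hypothetical.

```python
from collections import Counter


def minority_option(choices):
    """Return the option chosen by fewer players, or None on a tie.

    Assumption: exactly two options, labeled "A" and "B".
    """
    counts = Counter(choices)
    a, b = counts.get("A", 0), counts.get("B", 0)
    if a == b:
        return None  # assumed tie rule: nobody is in the minority
    return "A" if a < b else "B"


def payoff(own_choice, other_choices):
    """Assumed payoff: 1 if the player ends up in the minority, else 0."""
    winner = minority_option([own_choice, *other_choices])
    return 1 if own_choice == winner else 0


# Hypothetical scripted opponents: four pick "A", two pick "B".
bots = ["A"] * 4 + ["B"] * 2

print(payoff("A", bots))  # joining the majority side -> 0
print(payoff("B", bots))  # joining the minority side -> 1
```

Under this rule, a participant who joins the side already chosen by most others ends up in the majority and earns nothing, which is why following the majority in such a game cannot be explained by self-interest.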

Using bots to study prosocial behaviour is an "interesting yet underutilized resource" (Nielsen et al., 2022).

Summary for Policy Makers

The study has profound implications for understanding human behavior on digital platforms where interactions with bots are common. Herding can lead to irrational decisions, with significant consequences for financial markets and other areas. Telling people that they are interacting with bots changed what they believed about the bots, but it did not reduce herding.

This study underscores the persistence of herding behavior in human decision-making, even when participants are aware of interacting with automated entities (bots).

Disclaimer

The above summaries were generated with the assistance of an AI system.

Abstract

We explore human herding in a strategic setting where humans interact with automated entities (bots) and study the shift in the behavior and beliefs of humans when they are aware of interacting with bots. The strategic setting is an online minority game, where 1,997 participants are rewarded for following the minority strategy. This setting permits distinguishing between irrational herding and rational self-interest—a fundamental challenge in understanding herding in strategic contexts. Moreover, participants were divided into two groups: one informed of playing against bots (informed condition) and the other unaware (uninformed condition). Our findings revealed that while informed participants adjusted their beliefs about bots' behavior, their actual decisions remained largely unaffected. In both conditions, 30% of participants followed the majority, contrary to theoretical expectations of no herding. This study underscores the persistence of herding behavior in human decision-making, even when participants are aware of interacting with automated entities. The insights provide profound implications for understanding human behavior on digital platforms where interactions with bots are common.