When standard network measures fail to rank journals: A theoretical and empirical analysis

- Giacomo Vaccario, Luca Verginer

- Science of science, Network theory, Data science

- December 12, 2022

Abstract

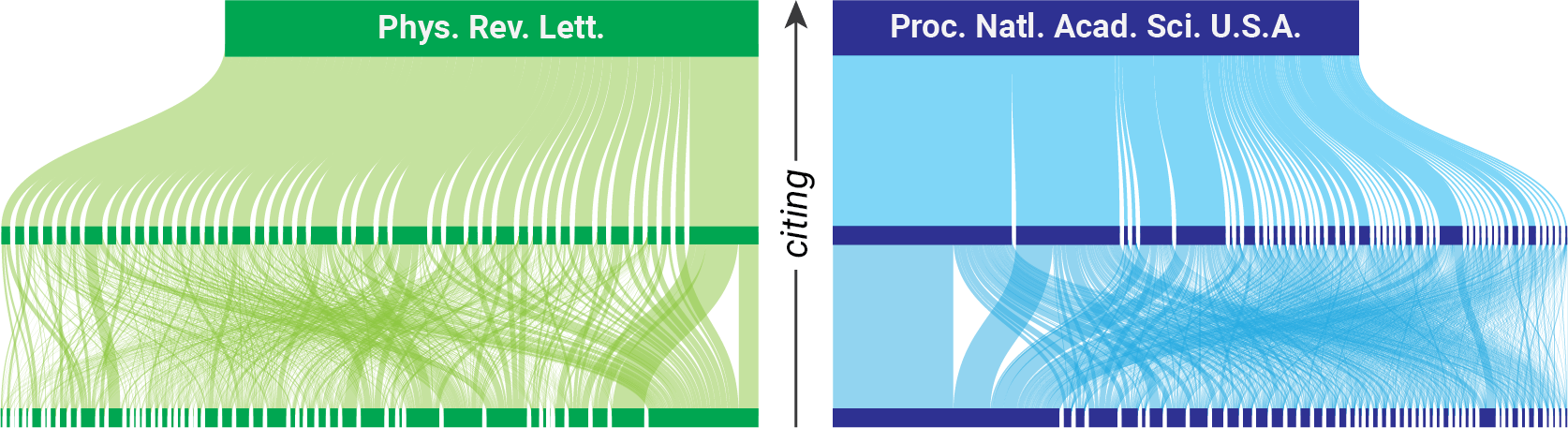

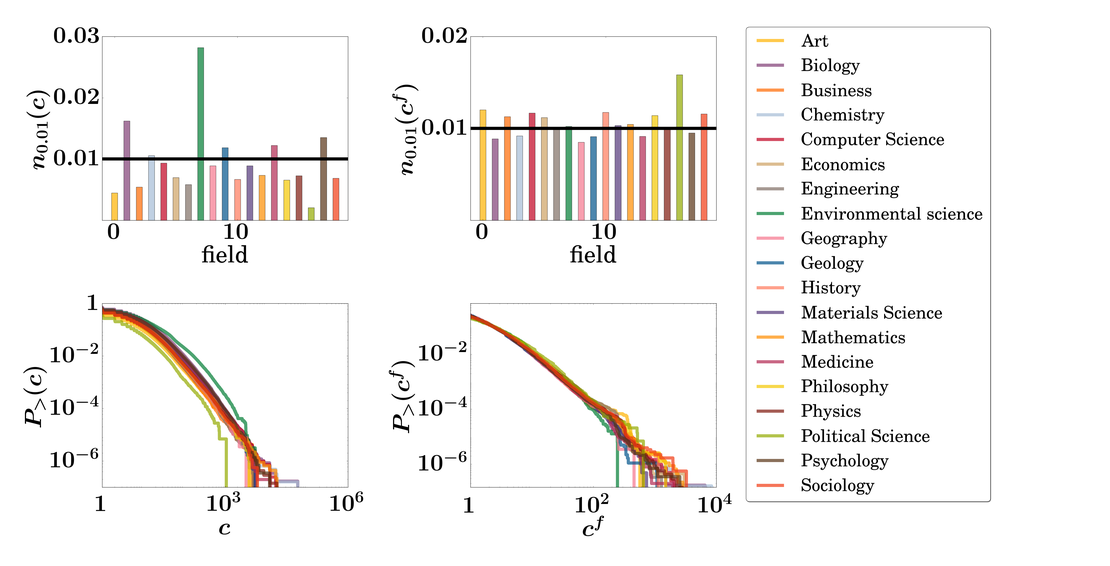

Journal rankings are widely used and are often based on citation data combined with a network approach. We argue that some of these network-based rankings can produce misleading results. From a theoretical point of view, we show that the standard network modelling approach for citation data at the journal level (i.e., the projection of paper citations onto journals) introduces fictitious relations among journals. To overcome this problem, we propose a citation path approach, and we show empirically that rankings based on the network approach and on the citation path approach differ substantially. Specifically, we use MEDLINE, the largest open-access bibliometric data set, listing 24,135 journals, 26,759,399 papers, and 323,356,788 citations. We focus on PageRank, an established and well-known network metric. Based on our theoretical and empirical analysis, we highlight the limitations of standard network metrics and propose a method to overcome them.
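To make the "fictitious relations" argument concrete, here is a minimal Python sketch on hypothetical toy data (four papers, three journals; all paper, journal, and function names are illustrative assumptions). Paper a (journal A) cites paper b (journal B), and paper c (also journal B) cites paper d (journal C). Projecting onto journals yields the edges A→B and B→C, hence a journal-level path A→B→C, although no chain of paper citations leads from a to d. The sketch then runs a plain power-iteration PageRank on the projected network, assuming the common damping factor of 0.85. It illustrates the problem only; it is not the authors' citation path method.

```python
from collections import defaultdict

# Hypothetical toy data (not from MEDLINE): paper -> journal, and
# paper-level citations as (citing, cited) pairs.
journal_of = {"a": "A", "b": "B", "c": "B", "d": "C"}
paper_citations = [("a", "b"), ("c", "d")]


def project_to_journals(citations, journal_of):
    """Standard projection: each paper citation adds weight to a journal edge."""
    weights = defaultdict(int)
    for citing, cited in citations:
        weights[(journal_of[citing], journal_of[cited])] += 1
    return dict(weights)


def paths_of_length_two(edges):
    """All directed paths u -> v -> w formed by the given (u, v) edges."""
    succ = defaultdict(list)
    for u, v in edges:
        succ[u].append(v)
    # .get() avoids inserting new keys into the defaultdict while iterating.
    return [(u, v, w) for u in list(succ) for v in succ[u] for w in succ.get(v, [])]


def pagerank(edges, alpha=0.85, tol=1e-12, max_iter=1000):
    """Weighted PageRank by power iteration; dangling mass is spread uniformly."""
    nodes = sorted({node for edge in edges for node in edge})
    out_weight = defaultdict(float)
    for (u, _), w in edges.items():
        out_weight[u] += w
    n = len(nodes)
    rank = {v: 1.0 / n for v in nodes}
    for _ in range(max_iter):
        dangling = sum(rank[v] for v in nodes if out_weight[v] == 0)
        new = {v: (1 - alpha) / n + alpha * dangling / n for v in nodes}
        for (u, v), w in edges.items():
            new[v] += alpha * rank[u] * w / out_weight[u]
        converged = sum(abs(new[v] - rank[v]) for v in nodes) < tol
        rank = new
        if converged:
            break
    return rank


journal_edges = project_to_journals(paper_citations, journal_of)

# Journal sequences of paper-level citation paths that actually exist.
real_paths = {
    tuple(journal_of[p] for p in path) for path in paths_of_length_two(paper_citations)
}
# Journal-level paths implied by the projected network.
projected_paths = set(paths_of_length_two(journal_edges))
fictitious = projected_paths - real_paths

ranks = pagerank(journal_edges)
print(fictitious)  # prints {('A', 'B', 'C')}
print({j: round(r, 3) for j, r in ranks.items()})
```

In this toy case PageRank on the projected network ranks C above B above A, partly because score flows along A→B→C, a chain that no paper actually follows. This is the kind of distortion that a path-based view of citations is meant to expose.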